Gradio is a Python package for quickly building web applications: with just a few lines of code, you can turn a Python function into an interactive web app.

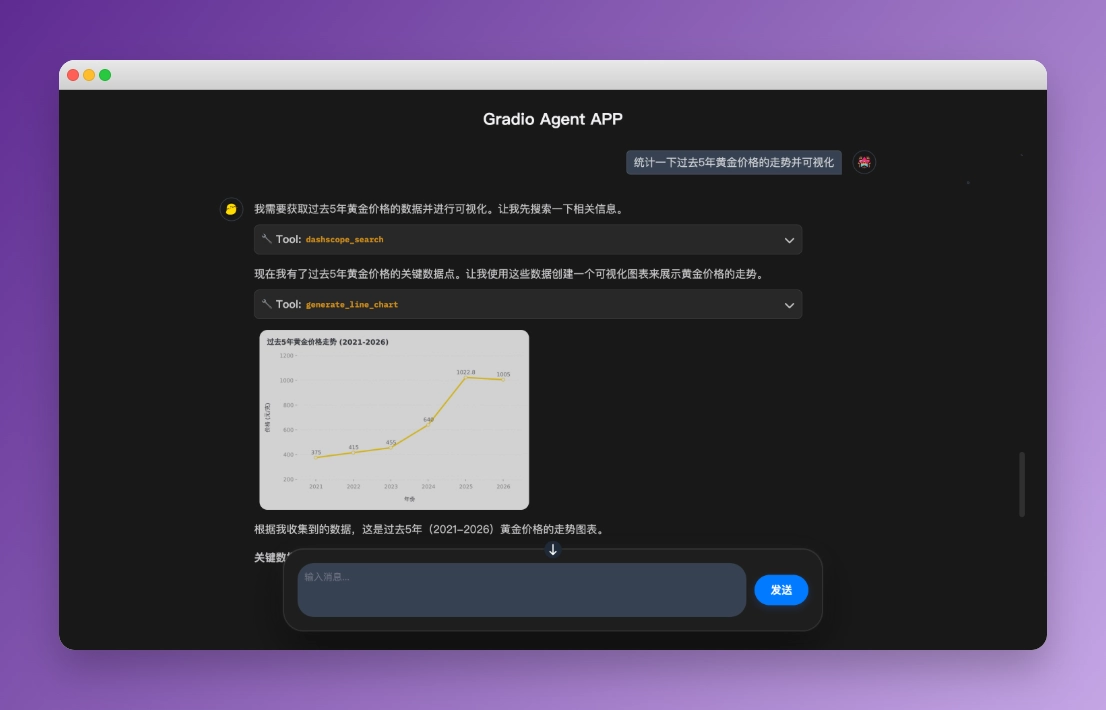

I built an Agent application with Gradio. Here is the result \(`Δ’)/

It supports web search, chart plotting, code execution, multi-step planning, automatic long-context compression ...
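The post doesn't describe how the app compresses long contexts. As a rough illustration only, here is a minimal sketch of one common strategy: once the history exceeds a budget, everything except the last few messages is folded into a single summary message. It assumes a word count as a stand-in for a real tokenizer and a hypothetical `summarize` callback where a real app would call an LLM; the thresholds are arbitrary.

```python
from typing import Callable

Message = dict[str, str]  # {"role": ..., "content": ...}


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: count whitespace-separated words
    return len(text.split())


def compress_history(
    messages: list[Message],
    budget: int,
    keep_last: int,
    summarize: Callable[[list[Message]], str],
) -> list[Message]:
    """Replace older messages with one summary once the history exceeds `budget`."""
    total = sum(count_tokens(m["content"]) for m in messages)
    if total <= budget or len(messages) <= keep_last:
        return messages  # short enough, or nothing older to fold: keep as-is
    split = len(messages) - keep_last
    older, recent = messages[:split], messages[split:]
    summary = {
        "role": "system",
        "content": f"Summary of earlier conversation: {summarize(older)}",
    }
    return [summary] + recent


# Hypothetical summarizer; a real app would ask an LLM to write the summary
def toy_summarize(msgs: list[Message]) -> str:
    return f"{len(msgs)} earlier messages"


history = [
    {"role": "user", "content": "one two three four"},
    {"role": "assistant", "content": "five six seven"},
    {"role": "user", "content": "eight nine"},
    {"role": "assistant", "content": "ten"},
]
compressed = compress_history(history, budget=5, keep_last=2, summarize=toy_summarize)
```

Keeping the most recent turns verbatim preserves the immediate context, while the summary keeps older facts available in compressed form.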

Anyone who has used Gradio knows its frontend is hard to customize. The result may not be perfect, but it is already a minimal, usable shell. There is nothing new on the backend: it mainly combines techniques covered in this guide, such as tools, MCP, middleware, multi-agent systems, and context engineering. The only real pitfall was streaming the Agent's output and rendering it incrementally. To understand how LangChain handles asynchronous event streams, I spent a lot of time writing unit tests. Fortunately, it was all worth it, and I'm satisfied with the final product. Of course, the happiest part was getting my friends to use it ✧(>o<)ノ✧
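The streaming pitfall is easier to see with a small model of it. Below is a minimal, dependency-free sketch, not the app's actual code: a fake event stream whose event names imitate LangChain's `astream_events(version="v2")` (`on_tool_start`, `on_tool_end`, `on_chat_model_stream`; the payload shapes are simplified assumptions), plus a renderer that turns token deltas into full snapshots. Gradio streams a generator function by replacing the output with each yielded value, which is why the renderer yields the accumulated text rather than each delta.

```python
import asyncio
from dataclasses import dataclass
from typing import Any, AsyncIterator


@dataclass
class Chunk:
    # Stand-in for a streamed message chunk; only `.content` is used here
    content: str


async def fake_event_stream() -> AsyncIterator[dict[str, Any]]:
    # Hypothetical agent run: one tool call, then the answer arrives in pieces.
    # Event names imitate astream_events(version="v2"); payloads are simplified.
    yield {"event": "on_tool_start", "name": "web_search", "data": {}}
    yield {"event": "on_tool_end", "name": "web_search", "data": {}}
    for piece in ["Hel", "lo", ", wor", "ld"]:
        await asyncio.sleep(0)  # hand control back to the loop, as a network stream would
        yield {
            "event": "on_chat_model_stream",
            "name": "chat_model",
            "data": {"chunk": Chunk(piece)},
        }


async def render_incrementally(
    events: AsyncIterator[dict[str, Any]],
) -> AsyncIterator[str]:
    """Turn deltas into snapshots: every yield is the full text to display so far."""
    status = ""
    answer = ""
    async for ev in events:
        kind = ev["event"]
        if kind == "on_tool_start":
            status = f"[calling {ev['name']}...]\n"
        elif kind == "on_tool_end":
            status = f"[{ev['name']} done]\n"
        elif kind == "on_chat_model_stream":
            answer += ev["data"]["chunk"].content  # accumulate the delta
        else:
            continue  # ignore event types this UI does not render
        yield status + answer


async def collect_frames() -> list[str]:
    return [frame async for frame in render_incrementally(fake_event_stream())]


frames = asyncio.run(collect_frames())
```

In an async generator handler, each snapshot could simply be forwarded with `yield`. Folding tool events into a status line lets the user see progress before the first token arrives; yielding raw deltas instead would leave the UI showing only the latest fragment.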

1. Feature Showcase

Below are a few cases showing how the Agent answers questions!

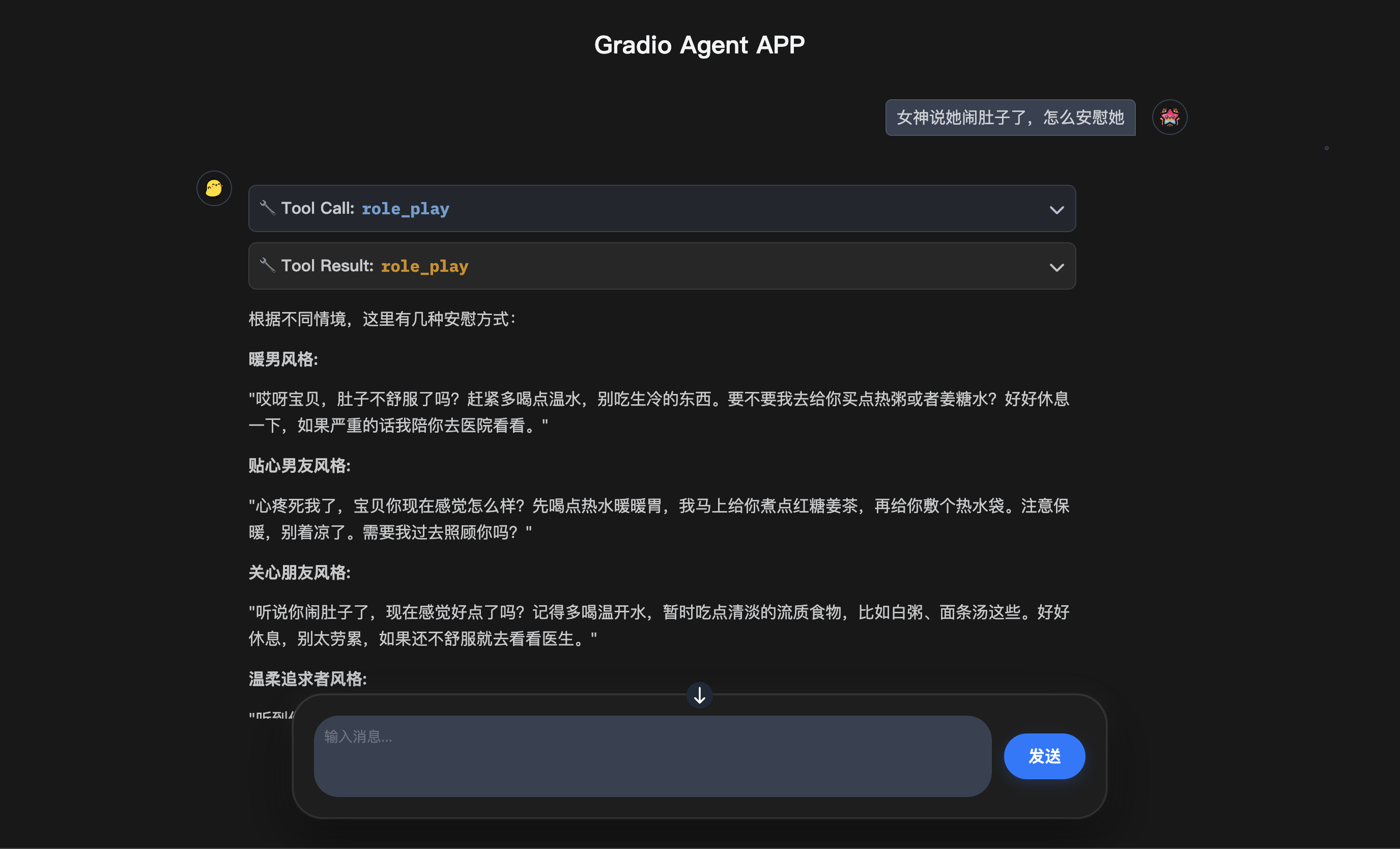

1.1 Role Play

| Test Case | My crush says she has a stomachache, how do I comfort her? |
| --- | --- |
| Tool Called | role-play (Function: Generate responses in various personas) |
| Self-Evaluation | ⭐⭐⭐ “Using AI makes it less genuine” |

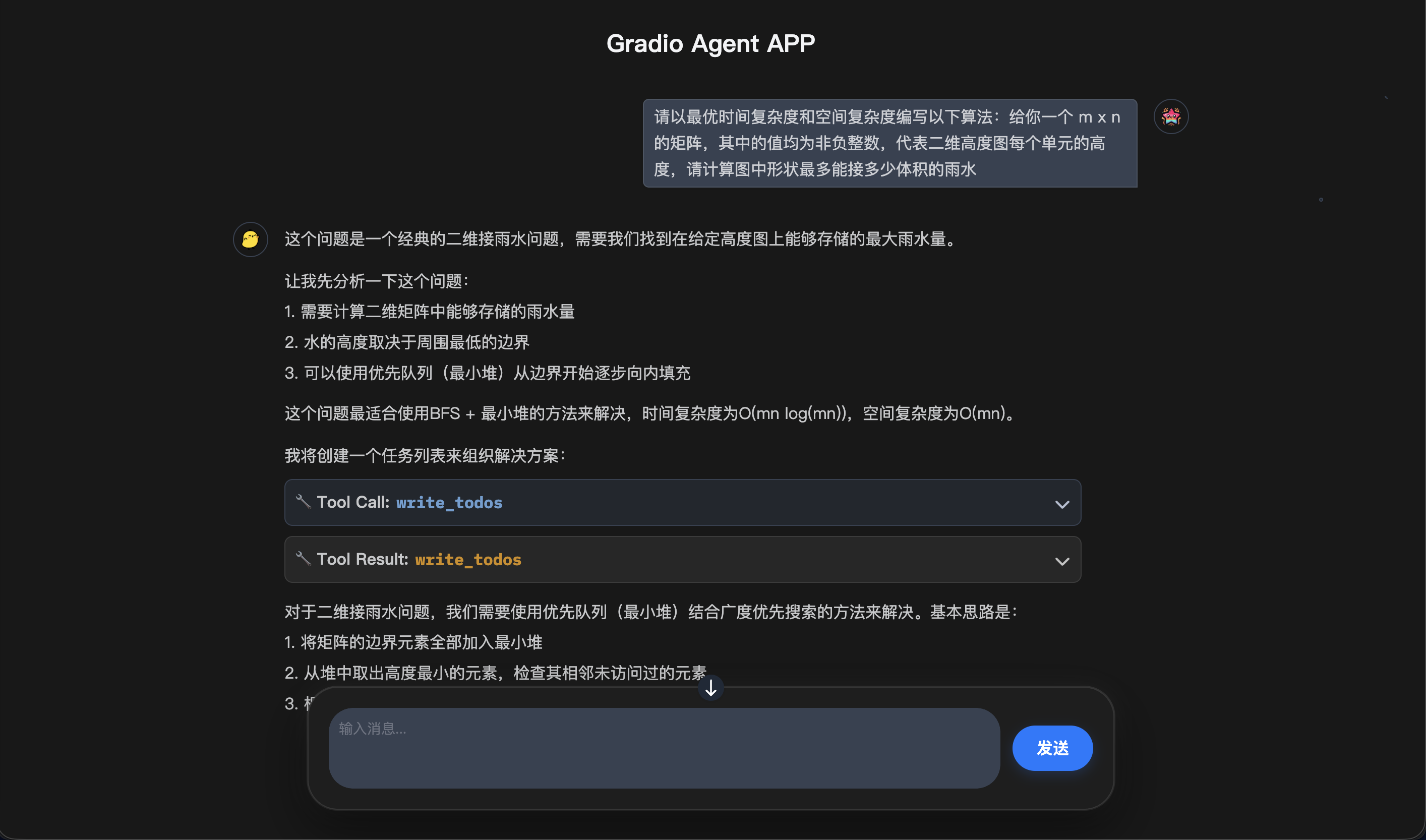

1.2 Code Execution

| Test Case | 3D Trapping Rain Water |
| --- | --- |
| Tool Called | code-execution (Function: Execute Python code) |
| Self-Evaluation | ⭐⭐⭐⭐⭐ “AC on first try, and beat 88% of users” |

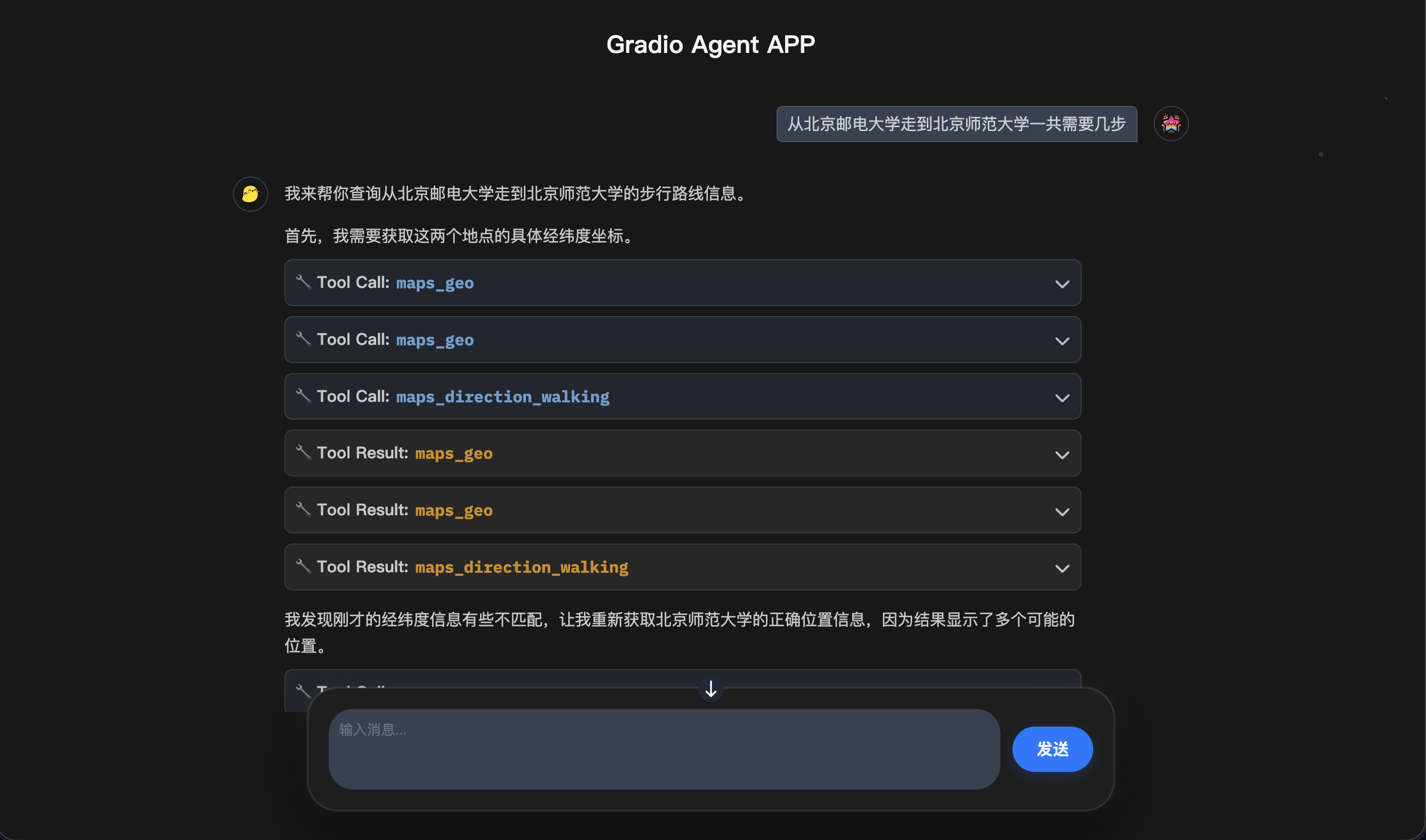

1.3 Amap Maps

| Test Case | How many steps from Beijing University of Posts and Telecommunications to Beijing Normal University? |
| --- | --- |
| Tool Called | amap-maps (Function: Get Amap travel data) |
| Self-Evaluation | ⭐⭐⭐⭐ “The answer is three steps: apply to Beijing Normal University, take the exam, and get the admission notice” |

Want to unlock more interesting cases? Deploy this APP and try more questions!

2. How to Deploy

GitHub Links 👇

Main Project (This Tutorial): dive-into-langgraph

Sub Project (This APP): dive-into-langgraph/app

2.1 Download Code

Clone the repository to your local machine:

```bash
git clone https://github.com/luochang212/dive-into-langgraph.git
```

Enter the project directory:

```bash
cd dive-into-langgraph/app
```

2.2 Configure Environment Variables

First, create the .env file:

```bash
cp .env.example .env
```

Then register an account on Alibaba Cloud Bailian, obtain your API_KEY, and set it in the .env file.
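If it helps to see what the `.env` file does: it is just `KEY=VALUE` lines that get loaded into environment variables at startup. The sketch below is a simplified, stdlib-only parser that illustrates the idea; the app itself most likely uses a library such as python-dotenv (an assumption, check `app.py`), and `API_KEY` is only a placeholder, so use whatever key name `.env.example` actually defines.

```python
import os
from pathlib import Path


def load_env_file(path: Path, override: bool = False) -> dict[str, str]:
    """Read KEY=VALUE lines from a .env file and export them to os.environ.

    Simplified: no multi-line values, escape sequences, or variable interpolation.
    """
    loaded: dict[str, str] = {}
    for raw in path.read_text(encoding="utf-8").splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue  # skip blank lines, comments, and malformed lines
        key, _, value = line.partition("=")
        key, value = key.strip(), value.strip()
        # Drop one pair of matching surrounding quotes, e.g. "sk-..." -> sk-...
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        loaded[key] = value
        if override or key not in os.environ:
            os.environ[key] = value  # by default, variables already set take precedence
    return loaded
```

Calling `load_env_file(Path(".env"))` before creating the model client would make the key available through `os.environ.get("API_KEY")` (hypothetical key name).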

2.3 Start the Agent Application

Before starting, make sure you are running Python 3.10 or later:

```bash
python -V
```

This web app supports three startup methods; one of them should suit you.

💻 Python Startup

1. Install dependencies

```bash
pip install -r requirements.txt
```

2. Start the application

```bash
python app.py
```

🚀 UV Startup

If you want to use the package versions pinned in pyproject.toml, you can start the app with uv.

1. Install uv

```bash
pip install uv -U
```

2. Sync the virtual environment with uv

```bash
uv sync
```

3. Start the application

```bash
uv run python app.py
```

📦 Docker Compose Startup

Use the following command to start the Docker container:

```bash
docker compose up -d
```

Once initialization finishes, you can access the app in your browser at: http://

3. Afterword

In the article “Alibaba Releases New Quick BI, Talking About ChatBI’s Underlying Architecture, Interaction Design, and Cloud Computing Ecosystem”, I wrote some thoughts about Agents:

Any product built on Agent technology can hardly escape the limitations of current Agent technology.

What are the limitations? Let me give a few examples:

Long-term Memory: Distill useful conversations, forget useless ones, and improve answer quality across conversations

Verification Capability: Memory alone is not enough; the Agent also has to learn to judge what is right, which is the ability to verify

Knowledge System: Once it can verify, it also needs to use that ability to turn memories into knowledge

Half a year has passed, and progress on long-term memory, verification, and knowledge systems is still limited. Still, I'm quite optimistic: I think these are solvable engineering problems, and the open question is how well the solutions will generalize in the end.

Ten years ago, could you have imagined today's LLMs? We already have technology that once would have seemed legendary, and we are approaching the truth of human intelligence at an unprecedented pace. Whether this wave of technology will lead to AGI, however, is still unknown. Those of us in the middle of it are like agents trained by reinforcement learning: we have to climb over mountains and through valleys ourselves to get any signal about which way is forward.

I think the probability of AGI in the short term is small, because human intelligence is not so easily hacked. As long as AI generalizes less well than humans do, human skills will naturally shift toward the areas AI has not yet generalized to. The good news is that work still needs us; the bad news is that we still need to work.

Longing in my heart, plain shoes on my feet. I look forward to meeting you again in a world where work is no longer needed ૮₍˶˘~˘˶₎ა

— 2026-01-14 · Night in Beijing